Symbolic Python

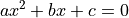

In standard mathematics we routinely write down abstract variables or concepts and manipulate them without ever assigning specific values to them. An example would be the quadratic equation

$$a x^2 + b x + c = 0$$

and its roots $x_{\pm}$: we can write down the solutions of the equation and discuss the existence, within the real numbers, of the roots, without specifying the particular values of the parameters $a$, $b$ and $c$.

In a standard computer programming language, we can write functions that encapsulate the solutions of the equation, but calling those functions requires us to specify values of the parameters. In general, the value of a variable must be given before the variable can be used.

However, there do exist Computer Algebra Systems that can perform manipulations in the “standard” mathematical form. Through the university you will have access to Wolfram Mathematica and Maple, which are commercial packages providing a huge range of mathematical tools. There are also freely available packages, such as SageMath and sympy. These are not always easy to use, as all CAS have their own formal languages that rarely perfectly match your expectations.

Here we will briefly look at sympy, which is a pure Python CAS. sympy is not well suited to large or complex calculations, as it can be far slower than the alternatives. However, it interfaces very cleanly with Python, so it can be used inside Python code, especially to avoid typing in lengthy expressions by hand.

sympy

Setting up

Setting up sympy is straightforward:

[1]:

import sympy

sympy.init_printing()

The standard import command is used. The init_printing command looks at your system to find the clearest way of displaying the output; this isn’t necessary, but is helpful for understanding the results.

To do anything in sympy we have to explicitly tell it if something is a variable, and what name it has. There are two commands that do this. To declare a single variable, use

[2]:

x = sympy.Symbol('x')

To declare multiple variables at once, use

[3]:

y, z0 = sympy.symbols(('y', 'z_0'))

Note that the Python variable name does not need to match the name with which the symbol is displayed. We have used this with z0 above, which is displayed as $z_0$:

[4]:

z0

[4]:
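A minimal sketch of the same idea, assuming only that sympy is installed: sympy.symbols also accepts a single space-separated string, and the symbol's `name` attribute holds the display name, independent of the Python variable it is bound to.

```python
import sympy

# The Python variable (left) and the sympy symbol name (string) are independent.
y, z0 = sympy.symbols('y z_0')   # space-separated string instead of a tuple

print(z0.name)                   # the name sympy stores and displays
print(str(y * z0))
# Symbols with the same name (and assumptions) compare equal.
print(sympy.Symbol('z_0') == z0)
```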

Once we have variables, we can build new expressions by operating on them:

[5]:

a = x + y

b = y * z0

print("a={}. b={}.".format(a, b))

a=x + y. b=y*z_0.

[6]:

a

[6]:

In addition to variables, we can also define general functions. There is only one option for this:

[7]:

f = sympy.Function('f')
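As a short sketch of how such a general function behaves (the expression `f(x)**2` is just an illustrative choice): it must be applied to arguments before use, and because it has no formula, sympy keeps its derivative unevaluated while still applying the chain rule.

```python
import sympy

x = sympy.Symbol('x')
f = sympy.Function('f')   # an undefined function: no formula attached

expr = f(x)**2            # f is applied to x before being used
deriv = expr.diff(x)      # chain rule applies; f'(x) stays as a Derivative
print(deriv)
```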

In-built functions

We have seen already that mathematical functions can be found in different packages. For example, the $\sin$ function appears in math as math.sin, acting on a single number. It also appears in numpy as numpy.sin, where it can act on vectors and arrays in one go. sympy re-implements many mathematical functions, for example as sympy.sin, which can act on abstract (sympy) variables.
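A small comparison sketch, assuming only the standard library math module and sympy: math.sin needs a concrete number and fails on a sympy symbol, whereas sympy.sin returns exact values and leaves symbolic arguments unevaluated.

```python
import math
import sympy

x = sympy.Symbol('x')

# math.sin needs a float; a sympy Symbol cannot be converted to one.
try:
    math.sin(x)
    math_failed = False
except TypeError:
    math_failed = True

exact = sympy.sin(sympy.pi / 6)   # sympy evaluates this exactly
symbolic = sympy.sin(x)           # stays as sin(x)
print(math_failed, exact, symbolic)
print(math.sin(math.pi / 6))      # floating-point approximation from math
```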

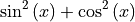

Whenever using sympy we should use sympy functions, as these can be manipulated and simplified. For example:

[8]:

c = sympy.sin(x)**2 + sympy.cos(x)**2

[9]:

c

[9]:

[10]:

c.simplify()

[10]:

Note the steps taken here. c is an object, something that sympy has created. Once created it can be manipulated and simplified, using the methods on the object. It is useful to use tab completion to look at the available commands. For example,

[11]:

d = sympy.cosh(x)**2 - sympy.sinh(x)**2

Now type d. and then tab, to inspect all the available methods. As before, we could do

[12]:

d.simplify()

[12]:

but there are many other options.
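A few of those other options are sketched below; the example expressions are illustrative choices. Each function performs one targeted transformation rather than the general-purpose search that simplify does.

```python
import sympy

x = sympy.Symbol('x')
d = sympy.cosh(x)**2 - sympy.sinh(x)**2

expanded = sympy.expand((x + 1)**3)         # multiply out products and powers
factored = sympy.factor(x**2 - 1)           # write as a product of factors
as_exp = d.rewrite(sympy.exp)               # hyperbolic functions as exponentials
print(expanded, factored, as_exp, sep='\n')
print(sympy.simplify(as_exp))
```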

Solving equations

Let us go back to our quadratic equation and check the solution. To define an equation we use the sympy.Eq function:

[13]:

a, b, c, x = sympy.symbols(('a', 'b', 'c', 'x'))

quadratic_equation = sympy.Eq(a*x**2+b*x+c, 0)

sympy.solve(quadratic_equation)

[13]:

![$$\left [ \left \{ a : - \frac{1}{x^{2}} \left(b x + c\right)\right \}\right ]$$](_images/math/0f009c54874c33ed6f32addbfae659f381de2bd4.png)

What happened here? sympy does not know that we wanted to solve for x! As no unknown was specified, it picked one of the free symbols itself (here a) and solved for that. Let us try again, this time telling it which variable to solve for:

[14]:

sympy.solve(quadratic_equation, x)

[14]:

![$$\left [ \frac{1}{2 a} \left(- b + \sqrt{- 4 a c + b^{2}}\right), \quad - \frac{1}{2 a} \left(b + \sqrt{- 4 a c + b^{2}}\right)\right ]$$](_images/math/d9c0d5c191c06b2fc06919155498bf1e1e6a20be.png)

This is our expectation: multiple solutions, returned as a list. We can access and manipulate these results:
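One way to sanity-check these roots, sketched here as an illustration rather than as part of the original workflow, is Vieta's formulas: the roots should sum to $-b/a$ and multiply to $c/a$. The sketch also assumes the documented behaviour that solve treats a bare expression as "expression = 0".

```python
import sympy

a, b, c, x = sympy.symbols('a b c x')

# A bare expression is treated as "expression = 0".
roots = sympy.solve(a*x**2 + b*x + c, x)

# Vieta's formulas: sum of roots = -b/a, product of roots = c/a.
root_sum = sympy.simplify(roots[0] + roots[1])
root_product = sympy.simplify(roots[0] * roots[1])
print(root_sum, root_product)
```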

[15]:

roots = sympy.solve(quadratic_equation, x)

xplus, xminus = sympy.symbols(('x_{+}', 'x_{-}'))

xplus = roots[0]

xminus = roots[1]

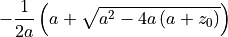

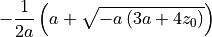

We can substitute in specific values for the parameters to find solutions:

[16]:

xplus_solution = xplus.subs([(a,1), (b,2), (c,3)])

xplus_solution

[16]:

We have a list of substitutions. Each substitution is given by a tuple, containing the variable to be replaced, and the expression replacing it. We do not have to substitute in numbers, as here, but could use other variables:

[17]:

xminus_solution = xminus.subs([(b,a), (c,a+z0)])

xminus_solution

[17]:

[18]:

xminus_solution.simplify()

[18]:
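subs also accepts a dictionary, and evalf turns an exact result into a floating-point number. The sketch below uses the illustrative values $x^2 - 3x + 2 = 0$ (roots 1 and 2) and $x^2 - 2 = 0$ (roots $\pm\sqrt{2}$); the names `values`, `numeric_roots` and `approx` are hypothetical.

```python
import sympy

a, b, c, x = sympy.symbols('a b c x')
roots = sympy.solve(sympy.Eq(a*x**2 + b*x + c, 0), x)

# subs() with a dict: x^2 - 3x + 2 = (x - 1)(x - 2).
values = {a: 1, b: -3, c: 2}
numeric_roots = sorted(r.subs(values) for r in roots)
print(numeric_roots)

# evalf() gives floating-point approximations, here for x^2 - 2 = 0.
approx = [r.subs({a: 1, b: 0, c: -2}).evalf() for r in roots]
print(approx)
```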

We can use similar syntax to solve systems of equations, such as $x + 2y = 0$, $xy = z_0$, for $x$ and $y$:

[19]:

eq1 = sympy.Eq(x+2*y, 0)

eq2 = sympy.Eq(x*y, z0)

sympy.solve([eq1, eq2], [x, y])

[19]:

![$$\left [ \left ( - \sqrt{2} \sqrt{- z_{0}}, \quad \frac{\sqrt{2}}{2} \sqrt{- z_{0}}\right ), \quad \left ( \sqrt{2} \sqrt{- z_{0}}, \quad - \frac{\sqrt{2}}{2} \sqrt{- z_{0}}\right )\right ]$$](_images/math/400ae378a94bec2f1445c40c3b01478ef08a09d4.png)
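A minimal sketch of checking these solutions by substituting them back in, assuming sympy's `dict=True` option for solve, which returns one dictionary per solution that can be passed straight to subs:

```python
import sympy

x, y, z0 = sympy.symbols('x y z_0')
eq1 = sympy.Eq(x + 2*y, 0)
eq2 = sympy.Eq(x*y, z0)

solutions = sympy.solve([eq1, eq2], [x, y], dict=True)
for sol in solutions:
    # Each residual lhs - rhs should simplify to zero for a genuine solution.
    residuals = [sympy.simplify((eq.lhs - eq.rhs).subs(sol)) for eq in (eq1, eq2)]
    print(sol, residuals)
```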

Differentiation and integration

Differentiation

There is a standard function for differentiation, diff:

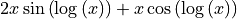

[20]:

expression = x**2*sympy.sin(sympy.log(x))

sympy.diff(expression, x)

[20]:

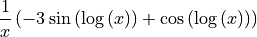

A parameter can control how many times to differentiate:

[21]:

sympy.diff(expression, x, 3)

[21]:

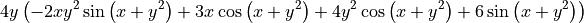

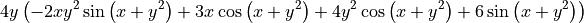

Partial differentiation with respect to multiple variables can also be performed by increasing the number of arguments:

[22]:

expression2 = x*sympy.cos(y**2 + x)

sympy.diff(expression2, x, 2, y, 3)

[22]:

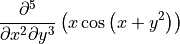
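The counts are shorthand for repeating a variable, and for this smooth expression the order of differentiation does not matter. A short sketch comparing three spellings of the same fifth derivative (`d1`, `d2`, `d3` are hypothetical names):

```python
import sympy

x, y = sympy.symbols('x y')
expression2 = x*sympy.cos(y**2 + x)

d1 = sympy.diff(expression2, x, 2, y, 3)        # counts after each variable
d2 = sympy.diff(expression2, x, x, y, y, y)     # variables repeated explicitly
d3 = expression2.diff(y, 3, x, 2)               # method form, other order
print(sympy.expand(d1 - d2), sympy.expand(d1 - d3))
```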

There is also a function representing an unevaluated derivative:

[23]:

sympy.Derivative(expression2, x, 2, y, 3)

[23]:

These can be useful for display, for building up a calculation in stages, for simplification, or when the derivative cannot be evaluated. An unevaluated derivative can be evaluated explicitly using its doit method:

[24]:

sympy.Derivative(expression2, x, 2, y, 3).doit()

[24]:
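A minimal sketch of the two-stage workflow, using the single-variable expression from earlier: the Derivative object stays unevaluated until doit is called, after which it agrees with calling diff directly.

```python
import sympy

x = sympy.Symbol('x')
expression = x**2*sympy.sin(sympy.log(x))

# Stage 1: build the derivative without evaluating it.
unevaluated = sympy.Derivative(expression, x)
print(unevaluated)

# Stage 2: evaluate it; the result should match diff().
evaluated = unevaluated.doit()
agrees = sympy.simplify(evaluated - sympy.diff(expression, x)) == 0
print(evaluated, agrees)
```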

Integration

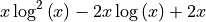

Integration uses the integrate function. This can calculate either definite or indefinite integrals, but will not include the integration constant.

[25]:

integrand=sympy.log(x)**2

sympy.integrate(integrand, x)

[25]:

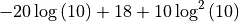

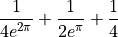

[26]:

sympy.integrate(integrand, (x, 1, 10))

[26]:

The definite integral is specified by passing a tuple, with the variable to be integrated (here x) and the lower and upper limits (which can be expressions).
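Since no integration constant is returned, differentiating the indefinite result should give back the integrand, and a limit can be a symbol as well as a number. A sketch, assuming `x` and a hypothetical upper limit `L` are declared positive so that the logarithm is well behaved:

```python
import sympy

x, L = sympy.symbols('x L', positive=True)
integrand = sympy.log(x)**2

# Indefinite integral (no constant): differentiating recovers the integrand.
F = sympy.integrate(integrand, x)
print(sympy.simplify(F.diff(x) - integrand))

# Definite integral with a symbolic upper limit, compared with F(L) - F(1).
G = sympy.integrate(integrand, (x, 1, L))
print(sympy.simplify(G - (F.subs(x, L) - F.subs(x, 1))))
```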

Note that sympy includes an “infinity” object oo (two o’s), which can be used in the limits of integration:

[27]:

sympy.integrate(sympy.exp(-x), (x, 0, sympy.oo))

[27]:

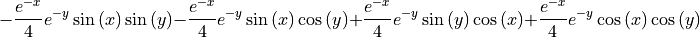

Multiple integrals, for higher-dimensional integration, are computed by passing several integration variables (or several limit tuples):

[28]:

sympy.integrate(sympy.exp(-(x+y))*sympy.cos(x)*sympy.sin(y), x, y)

[28]:

[29]:

sympy.integrate(sympy.exp(-(x+y))*sympy.cos(x)*sympy.sin(y),
                (x, 0, sympy.pi), (y, 0, sympy.pi))

[29]:

Again, there is an unevaluated integral:

[30]:

sympy.Integral(integrand, x)

[30]:

[31]:

sympy.Integral(integrand, (x, 1, 10))

[31]:

Again, the doit method will explicitly evaluate the result where possible.
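A short sketch of this, reusing the definite integral from above: doit gives the exact result, while evalf on the unevaluated Integral computes a numerical approximation directly. The names `integral`, `exact` and `numeric` are illustrative.

```python
import sympy

x = sympy.Symbol('x')
integrand = sympy.log(x)**2

integral = sympy.Integral(integrand, (x, 1, 10))   # not yet evaluated
exact = integral.doit()                            # exact symbolic value
numeric = integral.evalf()                         # numerical value
print(exact)
print(exact.evalf(), numeric)
```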

Differential equations

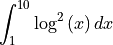

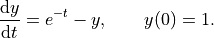

Defining and solving differential equations follows the pattern from the previous sections. We’ll use the same example problem as in the scipy case,

$$\frac{dy}{dt} = e^{-t} - y(t), \qquad y(0) = 1.$$

First we declare that $y$ is a function, currently unknown, and that $t$ is a variable.

[32]:

y = sympy.Function('y')

t = sympy.Symbol('t')

y is a general function, and at this point can be a function of anything (any number of variables with any names). To use it consistently, we must refer to it explicitly as a function of $t$ everywhere. For example,

[33]:

y(t)

[33]:

We then define the differential equation. sympy.Eq defines the equation, and diff differentiates:

[34]:

ode = sympy.Eq(y(t).diff(t), sympy.exp(-t) - y(t))

ode

[34]:

Here we have used diff as a method applied to the function. As sympy can’t differentiate $y(t)$ (it has no explicit expression), it leaves the derivative unevaluated.

We can now use the dsolve function to get the solution to the ODE. The syntax is very similar to the solve function used above:

[35]:

sympy.dsolve(ode, y(t))

[35]:

This is simple enough to solve by hand, but we’ll use symbolic methods to find the integration constant, by setting $t = 0$ and $y(0) = 1$.

[36]:

general_solution = sympy.dsolve(ode, y(t))

value = general_solution.subs([(t,0), (y(0), 1)])

value

[36]:

We then find the specific solution of the ODE.

[37]:

ode_solution = general_solution.subs([(value.rhs,value.lhs)])

ode_solution

[37]:
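The result can be checked with sympy's checkodesol, which substitutes a candidate solution back into the ODE and returns (True, 0) when it is satisfied. The sketch below also shows an alternative way to find the constant, solving $y(0) = 1$ for it directly; it picks out the constant as the free symbol other than $t$ rather than assuming its name.

```python
import sympy

t = sympy.Symbol('t')
y = sympy.Function('y')
ode = sympy.Eq(y(t).diff(t), sympy.exp(-t) - y(t))

general_solution = sympy.dsolve(ode, y(t))
print(sympy.checkodesol(ode, general_solution))

# The integration constant sympy introduced (whatever its name).
constant = (general_solution.rhs.free_symbols - {t}).pop()
value = sympy.solve(sympy.Eq(general_solution.rhs.subs(t, 0), 1), constant)[0]
specific = general_solution.subs(constant, value)
print(specific, sympy.checkodesol(ode, specific))
```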

Plotting

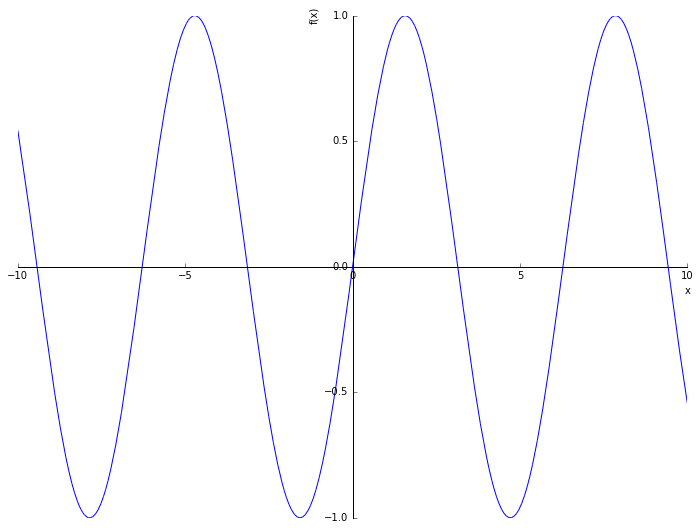

sympy provides an interface to matplotlib so that expressions can be directly plotted. For example,

[38]:

%matplotlib inline

from matplotlib import rcParams

rcParams['figure.figsize']=(12,9)

[39]:

sympy.plot(sympy.sin(x));

We can explicitly set limits, for example

[40]:

sympy.plot(sympy.exp(-x)*sympy.sin(x**2), (x, 0, 1));

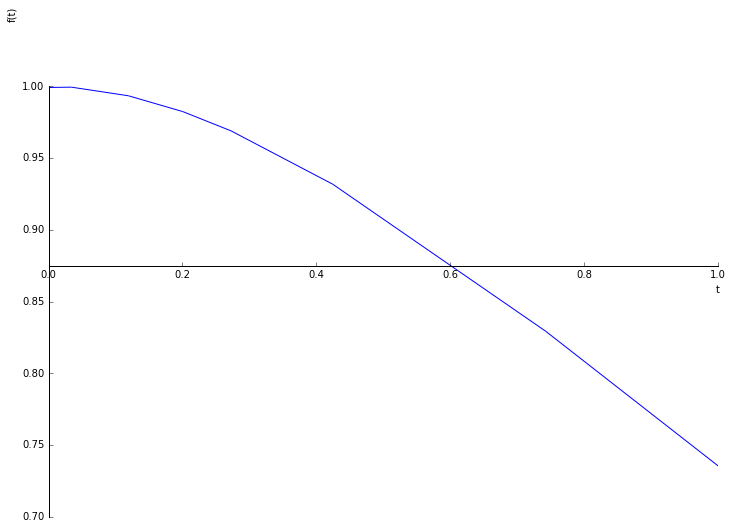

We can plot the solution to the differential equation computed above:

[41]:

sympy.plot(ode_solution.rhs, xlim=(0, 1), ylim=(0.7, 1.05));

This can be visually compared to the previous result. However, we would often like a more precise comparison, which requires numerically evaluating the solution to the ODE at specific points.

lambdify

At the end of a symbolic calculation using sympy we will have a result that is often long and complex, and that is needed elsewhere, for example in another piece of code. We could type the appropriate expression in by hand, but this is tedious and error prone. A better way is to make the computer do it.

The example we use here is the solution to the ODE above. We have solved it symbolically, and the result is straightforward. We can also solve it numerically using scipy. We want to compare the two.

First, let us compute the scipy numerical result:

[42]:

from numpy import exp
from scipy.integrate import odeint
import numpy

def dydt(y, t):
    """
    Defining the ODE dy/dt = e^{-t} - y.

    Parameters
    ----------
    y : real
        The value of y at time t (the current numerical approximation)
    t : real
        The current time t

    Returns
    -------
    dydt : real
        The RHS function defining the ODE.
    """
    return exp(-t) - y

t_scipy = numpy.linspace(0.0, 1.0)
y0 = [1.0]
y_scipy = odeint(dydt, y0, t_scipy)

We want to evaluate our sympy solution at the same points as our scipy solution, so that we can compare them directly. To do this without typing the solution in by hand, we construct a function that computes it. That is what lambdify is for: it creates a function from a sympy expression.

First let us get the expression explicitly:

[43]:

ode_expression = ode_solution.rhs

ode_expression

[43]:

Then we construct the function using lambdify:

[44]:

from sympy.utilities.lambdify import lambdify

ode_function = lambdify((t,), ode_expression, modules='numpy')

The first argument to lambdify is a tuple containing the arguments of the function to be created. In this case that’s just t, the time(s) at which we want to evaluate the expression. The second argument is the expression that we want converted into a function. The third argument, which is optional, tells lambdify to use numpy functions where possible. This means that if we call the function with numpy arrays, it will calculate using numpy array expressions, doing the whole calculation in a single call.
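A sketch with more than one argument, assuming a hypothetical symbol `C` standing for the integration constant so that the general solution $(C + t)e^{-t}$ becomes a two-argument numpy function `g` (also hypothetical). Arguments are matched in the order given in the tuple.

```python
import numpy
import sympy

t, C = sympy.symbols('t C')
expr = (C + t)*sympy.exp(-t)     # general solution with constant C

# Argument order follows the tuple; modules='numpy' maps exp to numpy.exp.
g = sympy.lambdify((t, C), expr, modules='numpy')

times = numpy.linspace(0.0, 1.0, 5)
values = g(times, 1.0)           # one vectorised call over the whole array
print(values)
print(g(0.5, 1.0))
```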

We now have a function that we can directly call:

[45]:

print("sympy solution at t=0: {}".format(ode_function(0.0)))

print("sympy solution at t=0.5: {}".format(ode_function(0.5)))

sympy solution at t=0: 1.0

sympy solution at t=0.5: 0.9097959895689501

And we can directly apply this function to the times at which the scipy solution is constructed, for comparison:

[46]:

y_sympy = ode_function(t_scipy)

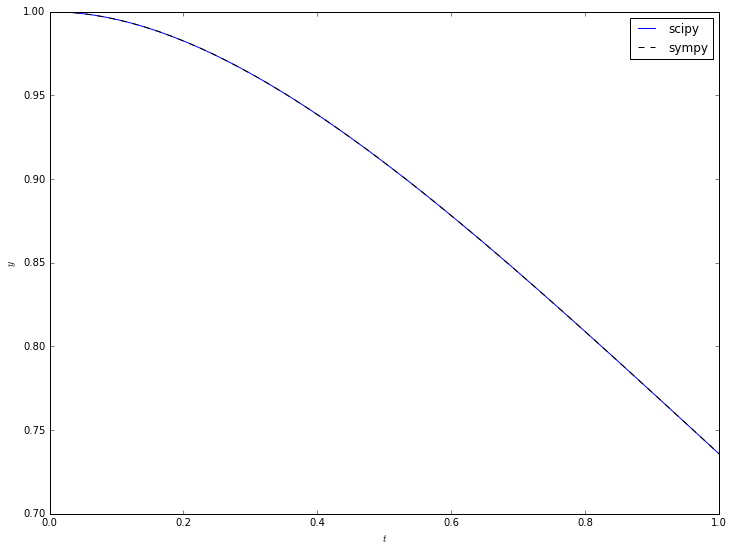

Now we can use matplotlib to plot both on the same figure:

[47]:

from matplotlib import pyplot

pyplot.plot(t_scipy, y_scipy[:,0], 'b-', label='scipy')

pyplot.plot(t_scipy, y_sympy, 'k--', label='sympy')

pyplot.xlabel(r'$t$')

pyplot.ylabel(r'$y$')

pyplot.legend(loc='upper right')

pyplot.show()

We see good visual agreement everywhere. But how accurate is it?

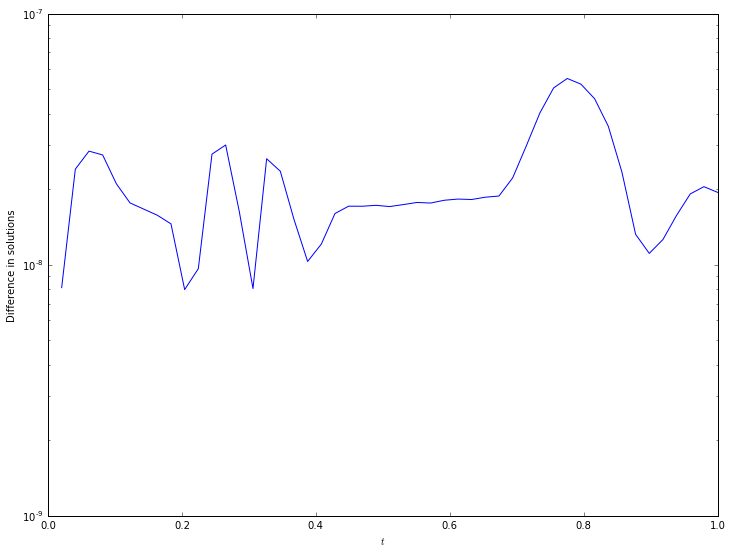

Now that we have numpy arrays explicitly containing the solutions, we can manipulate these to see the differences between solutions:

[48]:

pyplot.semilogy(t_scipy, numpy.abs(y_scipy[:,0]-y_sympy))

pyplot.xlabel(r'$t$')

pyplot.ylabel('Difference in solutions');

The difference between the two solutions is small everywhere. By changing the tolerances of the scipy solver (the rtol and atol arguments of odeint), this can be made more accurate (if needed) or less accurate (if the calculation takes too long and high accuracy is not required).
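A minimal sketch of tightening the tolerances, assuming the exact solution $(1 + t)e^{-t}$ (the specific solution found above) is typed in by hand purely for this check; the tolerance values 1e-12 are illustrative choices.

```python
import numpy
from scipy.integrate import odeint

def dydt(y, t):
    """RHS of dy/dt = e^{-t} - y."""
    return numpy.exp(-t) - y

t = numpy.linspace(0.0, 1.0)
exact = (1.0 + t)*numpy.exp(-t)   # specific solution, typed in for this check

y_default = odeint(dydt, [1.0], t)[:, 0]
y_tight = odeint(dydt, [1.0], t, rtol=1e-12, atol=1e-12)[:, 0]

err_default = numpy.max(numpy.abs(y_default - exact))
err_tight = numpy.max(numpy.abs(y_tight - exact))
print(err_default, err_tight)
```

Tighter tolerances cost more function evaluations, so they are worth using only when the extra accuracy is needed.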

Further reading

sympy has detailed documentation and a useful tutorial.

Exercise: systematic ODE solving

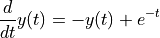

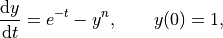

We are interested in the solution of

where  is an integer. The “minor” change from the above examples means that sympy can only give the solution as a power series.

Exercise 1

Compute the general solution as a power series for  .


Exercise 2

Investigate the help for the dsolve function to straightforwardly impose the initial condition  using the ics argument. Using this, compute the specific solutions that satisfy the ODE for  .

Exercise 3

Using the removeO method, plot each of these solutions for $t \in [0, 1]$.